In an audit, documentation variance from provider to provider becomes the DSO's problem.

Inconsistent charting across locations isn't a provider failure. It's a structural problem that surfaces at the worst possible moments: a payer denial, a peer review, a patient complaint. The clinical record is supposed to protect the provider and the organization. Too often, when it's tested, it doesn't.

You already know documentation inconsistency is a growing liability. You also know any tool claiming to fix it will get rejected the moment it adds a step to the workflow. That isn't resistance to manage. It's the operating reality any solution has to work within.

You Can't Train Your Way Out of Documentation Variance. You Need Better Infrastructure.

Payers don't deny claims because notes are poorly written. They deny them because the clinical record lacks reproducible evidence of the finding. A free-text note written after the fact, reconstructed from memory, varying by provider, and unsupported by a standardized artifact, is not a defensible clinical record. It's a liability waiting to be triggered.

Across 50 or more locations, that variability compounds. One provider charts a finding one way. Another charts the same finding differently. When the audit comes, the organization can't demonstrate a consistent diagnostic standard. The clinical record was supposed to protect the provider. Instead, it exposes them.

The cost of inaction is real. Audit time, payer denials, peer review complexity, and legal exposure are all downstream of the same upstream failure: diagnostic capture that's inconsistent, subjective, and unreproducible. Training providers to chart more carefully doesn't fix that. It addresses the output without touching the mechanism.

The mechanism is diagnostic capture itself. When the underlying finding isn't documented at the point of diagnosis with objective evidence any reviewer can evaluate independently, no amount of note quality closes the gap.

What a Defensible Clinical Record Requires at Scale

The DSOs reducing audit exposure and payer denials aren't doing it by retraining providers. They're changing what the clinical record is built from, shifting from provider-reconstructed notes to objective diagnostic artifacts (annotated imaging, timestamped findings, and provider-reviewed documentation) generated at the point of diagnosis, not after it.

This is where the clinical record stops being a narrative and starts being an artifact with auditable provenance.

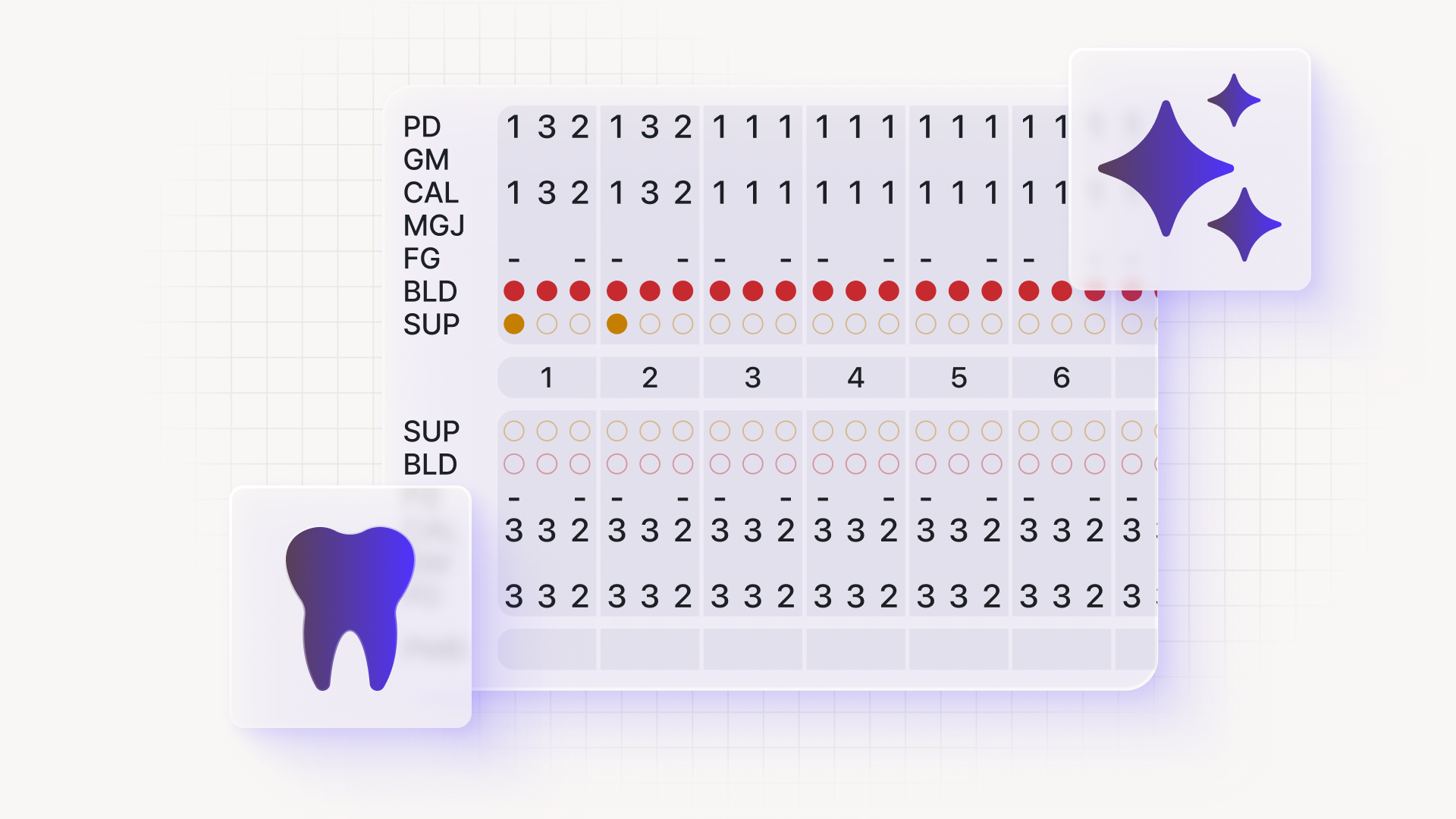

Overjet's Vision AI is built to produce exactly that. It's the only dental AI with FDA clearance for both caries detection and bone level quantification, the two diagnostic categories most frequently challenged by payers and most consequential in peer review and litigation.

FDA clearance answers the threshold question every CCO faces before bringing a clinical tool to providers: is the evidence strong enough that dentists will trust it, and will it hold up when it matters? Clearance means the evidentiary foundation has been evaluated by a body that isn't the vendor. That's what makes AI-captured diagnostic artifacts legally and clinically defensible, not just operationally useful.

The Clinical Evidence That Dentists Trust

In clinical validation, 100% of dentists found more caries with AI, and 91% found more periodontal disease.

Vision AI integrates into the existing workflow so AI-captured findings surface at the point of diagnosis, not as a separate documentation step. Provider-reviewed, timestamped notes and annotated imagery are generated as a byproduct of the exam. The provider remains the author of the clinical decision. The AI is the instrument that makes the evidence underlying that decision objective, reproducible, and auditable.

Manually entered notes are actually more legally vulnerable than AI-assisted records. They're reconstructed from memory, vary by provider, and lack objective artifact support. An annotated image with a timestamp and a provider sign-off is harder to challenge than a free-text note written after the fact. The real question isn't whether AI documentation is admissible, but whether your current documentation standard would survive the same scrutiny.

That standard translates directly into governance metrics. Annotated imaging and provider-reviewed notes create an audit-ready record that reduces back-and-forth with payers. The result is 5x faster claims decisions, with clinical rationale embedded in the record.

For COOs evaluating rollout feasibility: daily utilization tracking with same-day intervention means adoption is actively managed, not assumed. The pilot-first model, starting at 3 to 5 locations, means clinical validation precedes any DSO-wide commitment. A 15 to 25% increase in treatment acceptance connects diagnostic quality directly to case acceptance and production per practice.

What's Next

Defensible documentation isn't a charting problem. It's a diagnostic standardization problem. AI-captured, provider-reviewed, timestamped clinical artifacts are more legally defensible than manually entered notes because they're objective, consistent, and auditable.

The CCO's role isn't to mandate better charting. It's to give providers a diagnostic infrastructure that makes defensible documentation the default output of every exam.

Schedule a call to see how Vision AI fits your clinical governance framework and what a pilot at 3 to 5 locations would measure. The DSOs getting ahead of audit exposure and payer denials are not the ones that retrained their providers. They are the ones that changed what the record is built from.

Here's What CCOs Ask Us Most

Is the clinical evidence behind dental AI strong enough that dentists will actually trust it?

Yes, and the threshold test is FDA clearance, not vendor claims. Vision AI is the only dental AI with FDA clearance for both caries detection and bone level quantification, the two diagnostic categories most frequently challenged by payers and most consequential in peer review. In clinical validation, 100% of dentists found more caries with AI, and 91% found more periodontal disease. Dentists who find more with AI are dentists who trust AI.

Does AI-generated documentation increase our legal exposure or reduce provider autonomy?

Neither, because Vision AI doesn't generate autonomous documentation. It captures objective diagnostic artifacts (annotated radiographic findings, bone level measurements, and timestamped images) that the provider reviews and approves before they enter the clinical record. The provider remains the author of the clinical decision. Manually entered notes reconstructed from memory are actually more legally vulnerable than AI-assisted records with provider sign-off and a timestamp.

How does this affect our payer denial rate and audit exposure?

Annotated imaging, timestamped findings, and provider-reviewed notes create an audit-ready record that reduces back-and-forth with payers. Peer review gets simpler too, because the clinical rationale is embedded in the record, not reconstructed after the fact. The result is 5x faster claims decisions. The real question is not whether AI documentation holds up in an audit, but whether your current documentation standard would survive the same scrutiny.

Will providers actually adopt this, or will it add friction to workflow?

Vision AI integrates into the existing clinical workflow so AI-captured findings surface at the point of diagnosis, not as a separate documentation step. Provider-reviewed notes and annotated imagery are generated as a byproduct of the exam. Adoption is also actively managed: daily utilization tracking with same-day intervention means disengagement is caught and addressed in real time, not discovered at the end of a quarter.

Do we have to commit to full DSO rollout before we know if this works?

No. The pilot-first model starts at 3 to 5 locations and measures real clinical and operational outcomes before any DSO-wide commitment is made. The CCO isn't being asked to champion something unproven across 50-plus locations. The pilot also generates the production-per-practice data the COO needs before expanding.